Azure Terraform Pipeline — DevOps

Terraform is an open-source infrastructure as code (IaC) tool that lets users define and deploy infrastructure resources, such as servers, storage, and networking, using simple, human-readable configuration files.

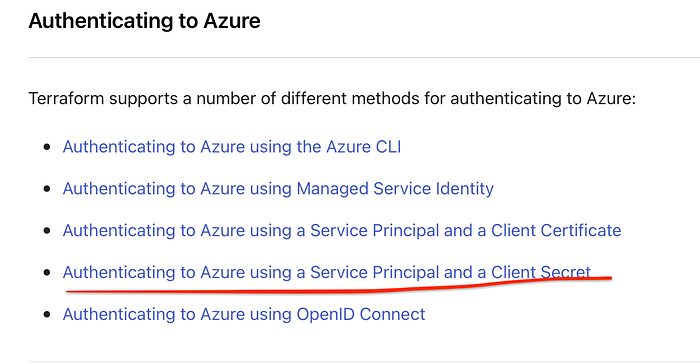

Azure Provider

The Azure Provider can be used to configure infrastructure in Microsoft Azure using the Azure Resource Manager APIs.

Docs: https://registry.terraform.io/providers/hashicorp/azurerm/latest/docs

On the docs page, click the authentication guide for a Service Principal and a Client Secret.

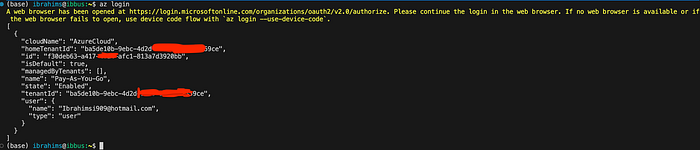

Log in to the Azure CLI from the VS Code terminal. The command opens a browser window where you enter your Azure credentials.

az login

Successfully logged in to Azure via the VS Code terminal.

Allow users to download data exported from the plan of a Run in a Terraform workspace.

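If you are using Git Bash on Windows, disable automatic path conversion first so that the /subscriptions/... scope in the command below is passed to the CLI unchanged.

export MSYS_NO_PATHCONV=1

Organizations can use subscriptions to manage costs and the resources that are created by users, teams, and projects.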

az ad sp create-for-rbac --role="Contributor" --scopes="/subscriptions/20000000-0000-0000-0000-000000000000"

An Azure service principal is an identity created for use with applications, hosted services, and automated tools to access Azure resources. This access is restricted by the roles assigned to the service principal, giving you control over which resources can be accessed and at which level.
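The az ad sp create-for-rbac command above prints a JSON object with appId, password, and tenant fields. One common way to hand those values to Terraform is through the ARM_* environment variables that the azurerm provider reads. The sketch below assumes that approach; every value shown is a placeholder, not real output, and the subscription ID is simply the sample one from the command above.

```shell
# Placeholders only: replace each value with the matching field
# from the JSON printed by "az ad sp create-for-rbac".
export ARM_CLIENT_ID="<appId from the command output>"
export ARM_CLIENT_SECRET="<password from the command output>"
export ARM_TENANT_ID="<tenant from the command output>"

# The same sample subscription ID used in the --scopes argument above.
export ARM_SUBSCRIPTION_ID="20000000-0000-0000-0000-000000000000"
```

With these set in the same terminal session, the provider can pick up the credentials without them being written into the configuration files. Treat the client secret like a password and keep it out of version control.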

Create a Service Principal